It’s been one month since the midterm elections, and a lot of people are still smarting from the results. But several folks saw this coming. As expected, the Republican Party retained control of the House of Representatives and took the Senate. But few anticipated the GOP would post such big numbers. Republicans also now hold most of the nation’s governorships. Here in Texas, the GOP has won every major state-level office for the fifth consecutive election cycle. A Democrat hasn’t won a statewide office since 1994, when Garry Mauro won reelection as Land Commissioner. By the time the current crop of officeholders finishes its terms, Democrats will have been shut out of statewide offices for two decades.

Texas Democrats had hoped this year would be theirs: that they would recapture at least one statewide office, preferably the governorship, or at least attorney general or comptroller. But they didn’t. They lost – in the worst way. As usual, most eligible Texans failed to show up at the polls. In fact, Texas had the lowest voter turnout of any state in the union: roughly 4.75 million people, or 28.5% of the voting-eligible population. In the Dallas–Fort Worth metropolitan area, some local officials blamed the damp, cold weather for the dismal response. Really? I recall an election in India several years ago where some people were carried in on their deathbeds – literally! – to cast a ballot. Nationally, this year’s midterm produced the worst voter turnout since 1942. That year was understandable: the U.S. had just entered World War II, and many young men were already fighting overseas. Men were also much more likely to vote than women back then, and a slew of voter restriction laws, especially in the South, kept poor and non-White voters from casting ballots. But that was then; things have changed considerably in 70 years. I don’t just find this year’s low turnout appalling. I find it disgusting. What happened?

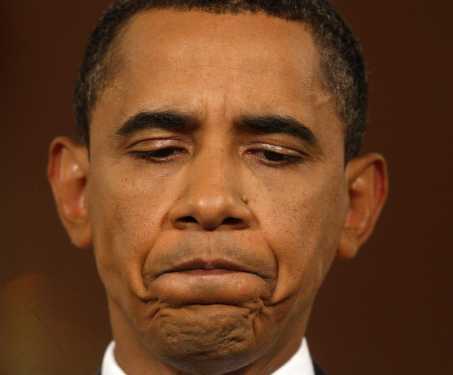

After the 2007–2008 financial crisis, a period in which the U.S. came closer to total economic collapse than at any time since the Great Depression, people felt their elected officials simply weren’t responding to their needs. President Obama and the Democrats inexplicably focused their energy on passing a healthcare bill and reforming the immigration system. The latter was labeled an attempt to appeal to Hispanics, which once again assumed that Hispanics care only about immigration, in the same way women supposedly care only about abortion and gays and lesbians only about same-sex marriage. Sweeping assumptions like that are insulting and always dangerous. It didn’t help that the Republican Party was determined, from the moment Obama won the 2008 presidential election, to obstruct his agenda. Every conservative lout from Dick Cheney to Mitch McConnell stated publicly and emphatically that they wanted to make Obama a “one-term president.” Fortunately, they failed. But they and the Democrats alike have failed miserably at just about everything else, especially the economy.

My gripe with Obama is his overt willingness to compromise. His first major capitulation to the Republicans came in December 2010, as the Bush-era tax cuts were due to expire. The GOP literally threatened to withhold votes on extending benefits for the long-term unemployed unless tax cuts for the wealthiest citizens and largest corporations were kept in place. Obama bowed to them, declaring openly that he didn’t want “the hostages” to be harmed. In December 2010, the Democrats still held majorities in both houses of Congress, and the President could very well have issued an executive order extending the benefits in question. But he backed down. That’s when I began to lose respect for him, in the same way I’d long since lost respect for most elected officials.

In 1934, when President Franklin D. Roosevelt realized just how bad the Great Depression really was, he made the bold – and shocking – decision to raise taxes on the wealthiest citizens and largest corporations (the same ones that had benefited from hefty Republican-spawned tax breaks the previous decade) to stimulate the economy. He convinced these people that such hikes would benefit them, too: more Americans would be able to enter the workforce and pay their own taxes, with money left over to buy goods produced by a variety of industries. It made sense. The policy worked to some extent, but the 1929 collapse had been so severe that the positive effects weren’t immediate. As a result, economists and the politicians who think they know so much have debated the logic of this move ever since.

The Democrats failed on another economic front. Attorney General Eric Holder’s Justice Department never prosecuted a single top banking executive in connection with the recent financial calamity. Anyone with at least half a brain knows the crisis didn’t happen by chance. It wasn’t the inevitable result of market ups and downs. Major financial institutions such as Citigroup and Fannie Mae were key players in the debacle. Both helped inflate the massive housing bubble of the early 2000s, replete with outrageous features such as zero-down purchases and mortgage-backed securities. As the crisis worsened toward the end of 2008, Citigroup convinced the federal government to give it a life-saving, multibillion-dollar bailout. Even so, it began laying off employees at offices across the globe. Fannie Mae, along with Freddie Mac, also received a multibillion-dollar, taxpayer-funded bailout after the federal government took both companies over in September 2008. Yet that investment didn’t become profitable for taxpayers until this year. The average American worker hasn’t seen much return on their “investment” in saving the “too-big-to-fail” banks. Those lounging in the economic ivory tower certainly have. Lloyd Blankfein, CEO of Goldman Sachs, for example, received a $16.2 million compensation package for 2011, despite a serious drop in corporate profits. The following year, Blankfein urged Americans to consider a later retirement age.

“You can look at history of these things,” he told CBS News, “and Social Security wasn’t devised to be a system that supported you for a 30-year retirement after a 25-year career. … So there will be things that, you know, the retirement age has to be changed, maybe some of the benefits have to be affected, maybe some of the inflation adjustments have to be revised. But in general, entitlements have to be slowed down and contained.”

How thoughtful. In February 2009, two months after I earned my college degree, the engineering company where I worked held its annual employee reviews. Because of budget constraints, my then-manager told me, the company couldn’t afford significant raises. So while my living expenses continued to rise, my salary essentially remained flat. I was laid off the following year.

People like Blankfein are part of the problem of wealth inequality in America today. That the Obama Administration neglected to prosecute the scoundrels responsible for all those business closings and job losses – again, this didn’t happen by accident – is reprehensible. If I robbed a convenience store of a hundred bucks and got caught, I’d be sure to serve some serious prison time. Yet hedge-fund managers who manipulated the stock market seem immune to consequences for even the most egregious financial misconduct.

Still, the economy has rebounded since Obama first took office. The unemployment rate, which peaked at 10% in October 2009, now stands at 5.8%. GDP growth stood at negative 5.4% in the first quarter of 2009 and is now at 3.9%. The federal budget deficit was $1.4 trillion in 2009 and now is $564 billion. That brings up yet another complaint: why didn’t the Democrats highlight those facts? During the 2012 reelection bid, Obama’s campaign proclaimed, “Bin Laden is dead, and GM is alive.”

It was simple, yet effective. For many of us, though, the economy really hasn’t recovered. Wages remain stagnant, and jobs are tenuous. We’ve become a contract society.

Frankly, I don’t really blame many people for not voting. I understand the frustration with the hollow words and obstinacy of some candidates. Wendy Davis, for example, began her campaign for Texas governor by attacking her opponent, Greg Abbott, instead of highlighting her own accomplishments. I think it was about six months into the campaign before she ran a more positive ad, one telling her life story. Voters get put off by that kind of animosity right from the start. Leading with attacks on the opposition is a dubious tactic; it’s almost as if the candidate is hiding something nefarious in their own past. That’s essentially how George W. Bush won his two presidential terms: he had no redeeming qualities, so his campaign team attacked the other guys. And some voters fell for it.

I don’t know what the immediate future holds for this country. With Republicans now in control of the U.S. Congress, I foresee further adolescent bickering between people who are otherwise educated business professionals. I don’t envision economic improvements or tax relief for us regular folks. It’s depressing. That Mars One venture is looking more and more attractive.