“All mothers are working mothers.”

Filed under News

“Those who dwell among the beauties and mysteries of the Earth are never alone or weary of life.”

Filed under News

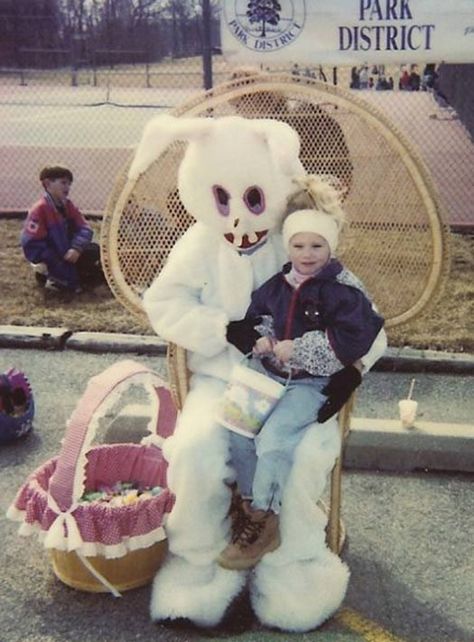

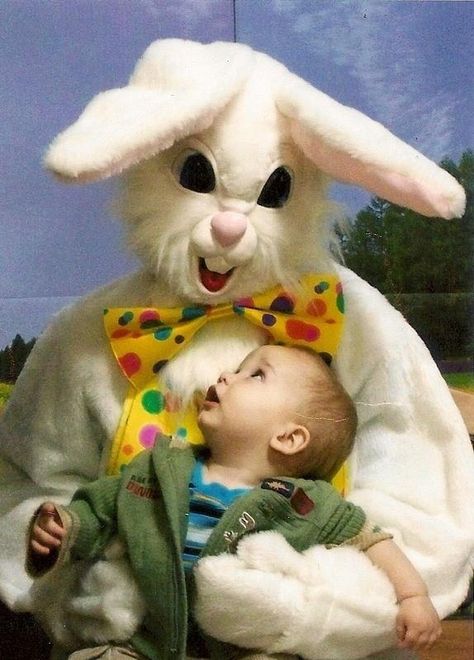

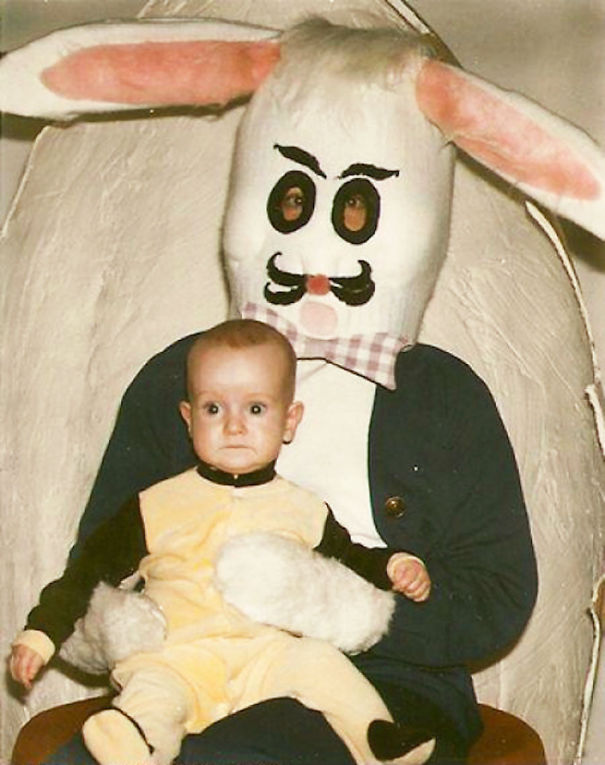

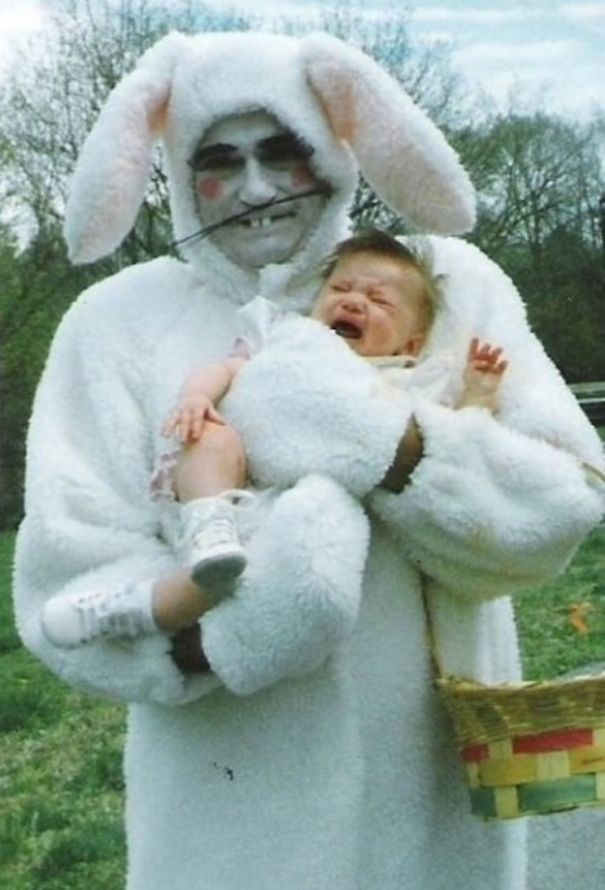

In case you hadn’t realized it, Dear Readers, Santa Claus and Halloween clowns aren’t the only holiday figures that can boast unnerving images. Easter bunnies claim a considerable share of macabre visages. After all, what mammal do you know of, besides the platypus and the echidna, that lays eggs? Of course, the platypus is just trying to procreate. The Easter bunny seems to have more nefarious intentions – it hides its eggs and convinces innocent little kids to look for them. Who does that?!

And, if you aren’t sufficiently alarmed by these photos, here’s Liam Neeson adding to the trauma:

Top image: Dave Whamond

Filed under Curiosities

“We are told to let our light shine, and if it does, we won’t need to tell anybody it does. Lighthouses don’t fire cannons to call attention to their shining – they just shine.”

It’s been nearly two years since the U.S. Supreme Court overturned Roe v. Wade and left it up to individual states to determine whether women may make their own decisions about their bodies. The Dobbs decision sent proverbial shock waves through the American conscience. For the first time in modern judicial history, a fundamental right was snatched away by a band of elitists who – like most extremists – feel they know what’s best for everyone else.

Now another abortion-related issue has come before the Court: whether the expanded access to mifepristone will stand. Basically, this medication allows an individual to end an early pregnancy without having to visit a clinic. Recently the U.S. Food and Drug Administration expanded access to the drug. That incited the ire of the Alliance for Hippocratic Medicine, a conservative anti-abortion group whose legal challenge eventually landed the matter on the plate of the High Court. If the Dobbs decision is any precedent, things don’t look good for mifepristone.

I might have one solution to the overall problem of unwanted pregnancies: tax-free condoms. Even before I entered my teens, my father put the fear of the Almighty into my brain – never trust a girl when she says she’s on birth control. Of course, women should never trust a man when he says she can quit her job because he’ll make her his queen, but that’s a different dilemma.

To many men, wearing a condom is comparable to showering in a raincoat. (Points to anyone who has actually heard that firsthand.) But, as we saw with the AIDS epidemic, condoms are a safeguard. Personally, I’m tired of hearing men say that birth control is a woman’s responsibility. A real man takes charge of his own birth control.

Unexpected pregnancies present more than a few challenges to the women who carry them. Children who come into the world unplanned and unwanted often end up unloved; thus, they often become society’s problem. Two decades ago, economists Steve Levitt and John Donohue hypothesized that a reduction in crime in the 1990s was one effect of the 1973 Roe v. Wade decision that legalized abortion nationwide. A strong economy and a greater law enforcement presence, especially in major metropolitan areas, were also cited as major factors. But it was the abortion connection that prompted the most controversy – and the greatest outrage. Liberals argued that abortion gave women greater autonomy over their own health care, while conservatives pointed to a reversal of liberal social policies beginning in the 1980s as the primary reason for the drop in criminal behavior. Each of these theories bears some truth.

Another interesting result of the Dobbs decision is the sudden rise in vasectomies here in the U.S. Perhaps some men are finally getting the hint that they also have reproductive choices. Institutions ranging from the Cleveland Clinic to Planned Parenthood are noting an increase in vasectomies. It’s both logical and practical.

But I still think eliminating taxes on condoms would encourage younger and/or single men to buy and use them. As of now, I don’t know of any state that does this, but I still feel it would be worth the trouble. States will garner tax revenue from a slew of other products anyway. I’m fully aware condoms are not a panacea for unwanted pregnancies; no form of birth control outside of abstinence is. But, just as with the foolishness of “Just Say No”, a blanket abstinence-only ideology isn’t reasonable either. Children cost money – as any parent can tell us. They should be a blessing, not a burden.

Filed under Essays

This is a great essay by fellow blogger Valentine Logar who never holds back, yet does it with style and class. Thanks, Val!

Filed under Essays

The image above represents something very important to me and, I’m sure, to most working people. For the first time since about 2000, I have no credit card debt. I paid off my last outstanding credit card in February and felt so ecstatic I almost had an orgasm! Key word – “almost”. But it’s still a great feeling. Credit card debt has been one of my vices – along with alcohol and road rage. Then again, credit card debt has been the vice of many Americans. Currently, Americans hold approximately USD 1.13 trillion in credit card debt, a burden that worsened with the 2008 economic downturn and even more so with the COVID-19 pandemic. (Ironically, a Republican was president of the United States at the start of each fiasco, which may or may not factor into it – but that’s a different matter.)

I remember paying off a massive amount of credit card debt in 1998, along with the truck loan I had at the time. And I was able to stay debt-free until I lost my job at a bank in 2001. Odd how those two things often coincide, isn’t it?

I always used to tell myself I just needed to earn more money. I struggled constantly when I worked for that bank and I kept saying I should return to college and earn a degree. I finally did that in 2007. But after graduating in December 2008, the engineering company I was working for then couldn’t afford much in the way of salary increases because of – wait for it – the sudden economic downturn. Damn! Then I got laid off in the fall of 2010 and struggled somewhat as I tried to make my freelance technical writing career flourish.

But by then, I’d learned an even more important lesson: you don’t always solve money problems with money. Indeed, some people earn six- and seven-figure annual salaries and are always in debt. It’s true, for the most part, that middle-class incomes have shrunk considerably since the late 1970s relative to the overall cost of living. A few years ago, economists finally confirmed what the rest of us lowly working-class drones already knew – “trickle down” economics doesn’t work! It never has and it never will. Yet conservative politicians keep pushing that theory onto the masses, and many people keep falling for it. That’s why I say my brain is too big to be conservative – with all due respect to my conservative friends and relatives.

In high school I was forced to take algebra and geometry and later wondered what purpose either discipline served. Other than knowing that the shortest distance between two points is a straight line, I feel that balancing a checkbook and figuring out percentages (so you know how much to tip the bartender) are the only truly essential math skills. Budgeting should be on that list, too. It’s good to know how long a light year is, but it’s more important to realize that there’s little point in keeping money in a savings account while you owe more than that on your credit cards. Two plus two is so hard for some folks to figure out.

Regardless, I’m glad I don’t have to wait for that zombie apocalypse to wipe out my credit card debt. Reality is often better.

Filed under Essays